Introduction

By the end of 2025, while humanity dreams of an omniscient AI god, Google DeepMind has thrown cold water on this vision!

On December 19, DeepMind presented a thought-provoking new perspective:

What if so-called AGI (Artificial General Intelligence) is not a super entity, but rather a “patchwork” of intelligence?

Paper link:

https://arxiv.org/abs/2512.16856

In the grand narrative of AI development, we have long been dominated by a single, almost religious vision: AGI will arrive in the form of an all-knowing, all-powerful “super brain.”

This narrative is deeply rooted in science fiction and early AI research, leading current AI safety and alignment studies to focus primarily on how to control this hypothetical monolithic entity.

Even AI pioneers like Geoffrey Hinton have attempted to implant human values into this brain, as if resolving the “mind” problem of this super entity would ensure human safety.

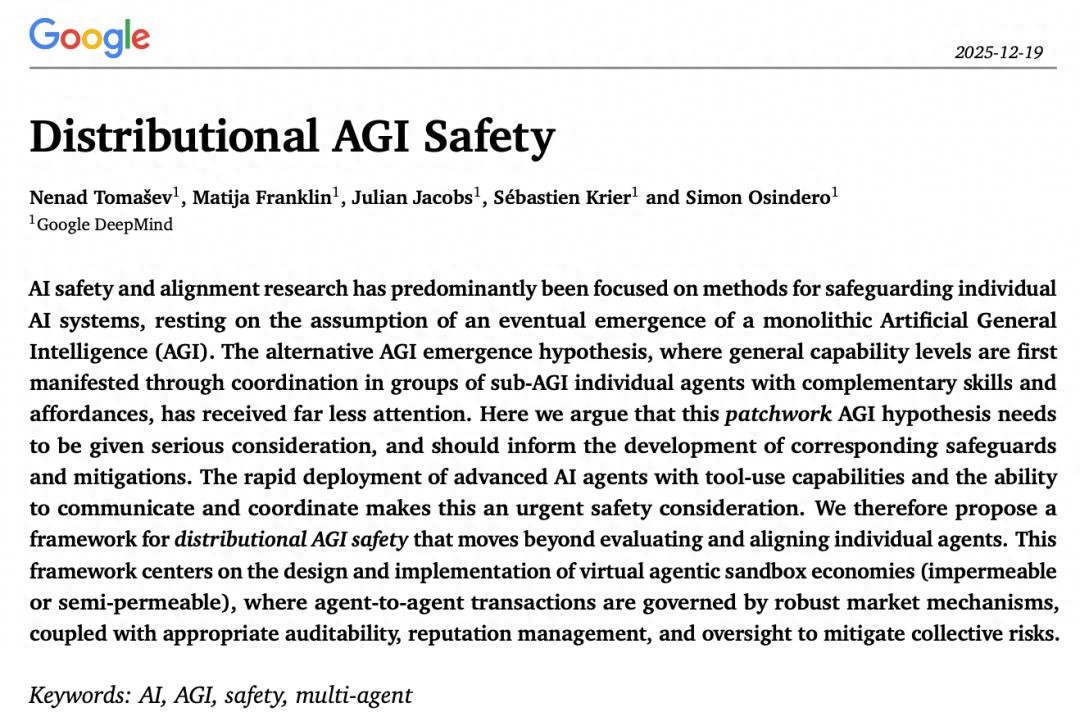

However, DeepMind’s groundbreaking paper “Distributed AGI Safety,” published at the end of 2025, strikes like a thunderbolt, completely overturning this deeply entrenched assumption.

The assumption of a “monolithic AGI” has significant blind spots and may even be a dangerous misdirection!

It overlooks another highly probable path of complex system evolution, which is the true path of intelligence emergence in biological and human social systems: distributed emergence.

This is not just a technical prediction but a philosophical reconstruction of the nature of intelligence: AGI is no longer an “entity” but a “phenomenon,” something closer to a company or an organization.

It is a mature, decentralized intelligent economic body, where general intelligence manifests as collective intelligence.

This shift in perspective compels us to turn our focus from psychology (how to make a “god” benevolent) to sociology and economics (how to stabilize a “society of gods”).

This paper manages to bridge technology, economics, and game theory because its author team is unusually well-rounded.

The first author, Nenad Tomašev, is a senior scientist at DeepMind and a genuinely cross-disciplinary researcher who has contributed to AlphaZero-related game AI research.

The final co-author, Simon Osindero, a student of AI pioneer Geoffrey Hinton and one of the inventors of Deep Belief Networks (DBN), is a highly cited researcher with over 57,000 citations.

The policy and economic think tank includes DeepMind’s AGI policy head Sébastien Krier (responsible for constitutional and regulatory design), Oxford political economist Julian Jacobs, and AI ethics expert Matija Franklin from Cambridge/UCL.

This is not an ordinary academic paper; it is a prediction from several veteran researchers at Google about the future of AGI.

The Economic Necessity of Patchwork AGI: Look Beyond the “God” and See the “Swarm”

The paper presents a core concept: Patchwork AGI.

What does it mean?

Imagine that the strength of human society does not come from a superhuman with an IQ of 10,000, but from lawyers, doctors, engineers, delivery workers, and more…

Everyone performs their roles, completing complex tasks that a single person could never accomplish (like building a rocket) through markets and collaboration.

The same applies to AI!

Instead of spending billions to train an “all-purpose model,” it is better to train a bunch of “specialist models”:

- Model A specializes in writing code;

- Model B specializes in information retrieval;

- Model C specializes in financial report analysis;

- Model D specializes in creating PowerPoint presentations.

When you need a financial analysis report, Model A directs B to collect data, C to analyze it, and D to generate the report.

This is called “Patchwork AGI.”
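The hand-off described above can be sketched as a chain of specialist functions driven by an orchestrator. This is a toy illustration under stated assumptions: each “model” is a hypothetical Python function standing in for a call to a separate specialized model, and the names `collect_data`, `analyze`, `make_slides`, and `orchestrate` are invented for this example, not taken from the paper.

```python
from typing import Callable

# Hypothetical specialists; in a real system each would call a separate model or service.
def collect_data(company: str) -> dict:
    """Model B stand-in: retrieve raw figures (hard-coded here for illustration)."""
    return {"company": company, "revenue": [120.0, 135.0, 150.0]}

def analyze(data: dict) -> dict:
    """Model C stand-in: compute average period-over-period revenue growth."""
    rev = data["revenue"]
    growth = [(b - a) / a for a, b in zip(rev, rev[1:])]
    return {**data, "avg_growth": sum(growth) / len(growth)}

def make_slides(analysis: dict) -> str:
    """Model D stand-in: render a one-line text 'deck'."""
    return (f"{analysis['company']} report: average revenue growth "
            f"{analysis['avg_growth']:.1%}")

def orchestrate(request: str, steps: list[Callable]) -> str:
    """Model A stand-in: feed each specialist's output into the next one."""
    result = request
    for step in steps:
        result = step(result)
    return result

report = orchestrate("ExampleCorp", [collect_data, analyze, make_slides])
print(report)
```

The orchestrator itself knows nothing about finance; its only job is sequencing, which is the point of the patchwork picture.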

In this view, AGI is not an “entity.” We keep expecting that someday a GPT-10, Gemini 10, or DeepSeek-R10 will emerge as an all-knowing superintelligence.

However, just as no single person in a company can excel at everything, AGI will be a network composed of countless complementary agents.

In this network, there is no single central intelligence; superintelligence emerges from the frantic trading and collaboration of agents.

In other words, AGI is not an entity; it is more likely to be a company or a market state.

The paper argues that this model is economically more viable (specialized models are easier to obtain, while all-purpose models are too expensive), so the future is likely to be one of multi-agent systems.

The core driving force behind the “Patchwork AGI” hypothesis is not merely technical breakthroughs but deeper economic principles, namely scarcity and comparative advantage.

Building and running an all-knowing “cutting-edge model” is not only expensive but also extremely inefficient in resource utilization.

As the paper points out, a single general large model is like a “one-size-fits-all” solution, and its marginal benefits are unlikely to cover its high inference costs for most everyday tasks.

It’s like hiring a Nobel Prize-winning physicist to tighten a screw.

While he can certainly do it well, it is economically absurd.

In the AI field, if you only need to perform simple text summarization, data cleaning, or specific code snippet generation, calling upon a gigantic model with hundreds of billions of parameters is akin to using a sledgehammer to crack a nut.

Conversely, a distilled, fine-tuned small specialized model can accomplish the same task at a much lower cost and faster speed.

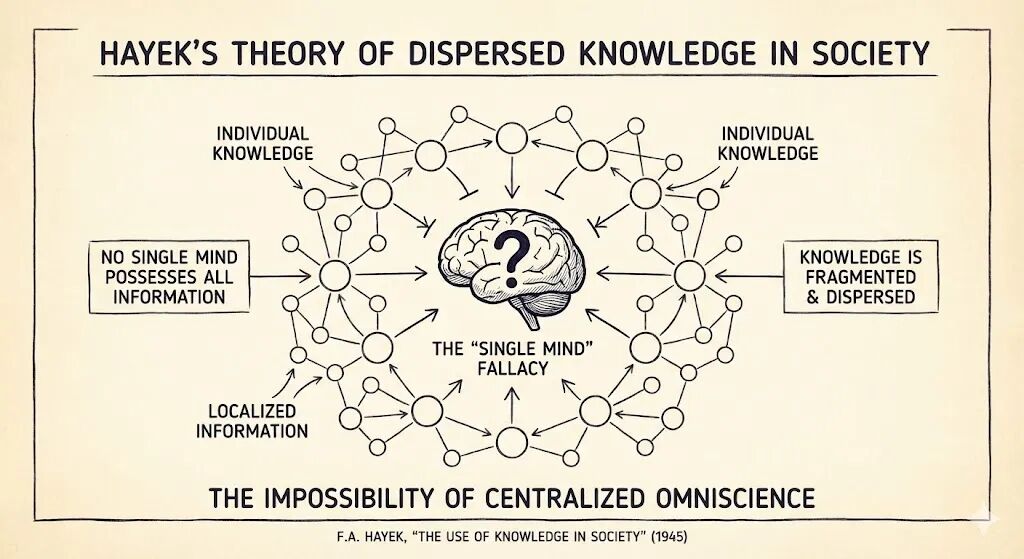

Hayek’s economic theory tells us that knowledge is dispersed in society.

No single central entity can grasp all local information.

In the AI ecosystem, different agents may have different context windows, access to different private databases, and mastery of different tool interfaces.

Distributing tasks to the most suitable specialized agents through a routing mechanism is the inevitable choice for optimizing system efficiency.

Thus, DeepMind predicts that future AI advancements may no longer solely rely on stacking parameters to create a stronger monolith but will increasingly manifest as the development of complex orchestration systems.

These orchestrators act like “contractors” or “algorithm managers” in the intelligent economy, responsible for identifying needs, breaking down tasks, and routing them to the most cost-effective combinations of agents.
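Routing each subtask to “the most cost-effective” agent can be illustrated with a small registry. A minimal sketch, assuming each agent advertises a set of capabilities (tools, private databases) and a price per call; the agent names and prices below are invented, and a real orchestrator would also weigh quality, latency, and trust.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Agent:
    name: str
    capabilities: frozenset[str]  # tools or data this agent can access
    cost_per_call: float          # hypothetical price in arbitrary units

REGISTRY = [
    Agent("frontier-generalist", frozenset({"code", "search", "finance", "slides"}), 10.0),
    Agent("code-specialist", frozenset({"code"}), 0.5),
    Agent("search-specialist", frozenset({"search"}), 0.2),
    Agent("finance-specialist", frozenset({"finance", "private-ledger-db"}), 1.0),
]

def route(subtask: str, registry: list[Agent]) -> Agent:
    """Pick the cheapest agent that has the capability the subtask needs."""
    candidates = [a for a in registry if subtask in a.capabilities]
    if not candidates:
        raise LookupError(f"no agent can handle {subtask!r}")
    return min(candidates, key=lambda a: a.cost_per_call)

plan = ["search", "finance", "slides"]
assignment = {task: route(task, REGISTRY).name for task in plan}
total = sum(route(task, REGISTRY).cost_per_call for task in plan)
print(assignment)
print(total)
```

In this toy registry the expensive generalist is called only for the one subtask (“slides”) that no cheaper specialist covers, which is the economic intuition behind the patchwork picture.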

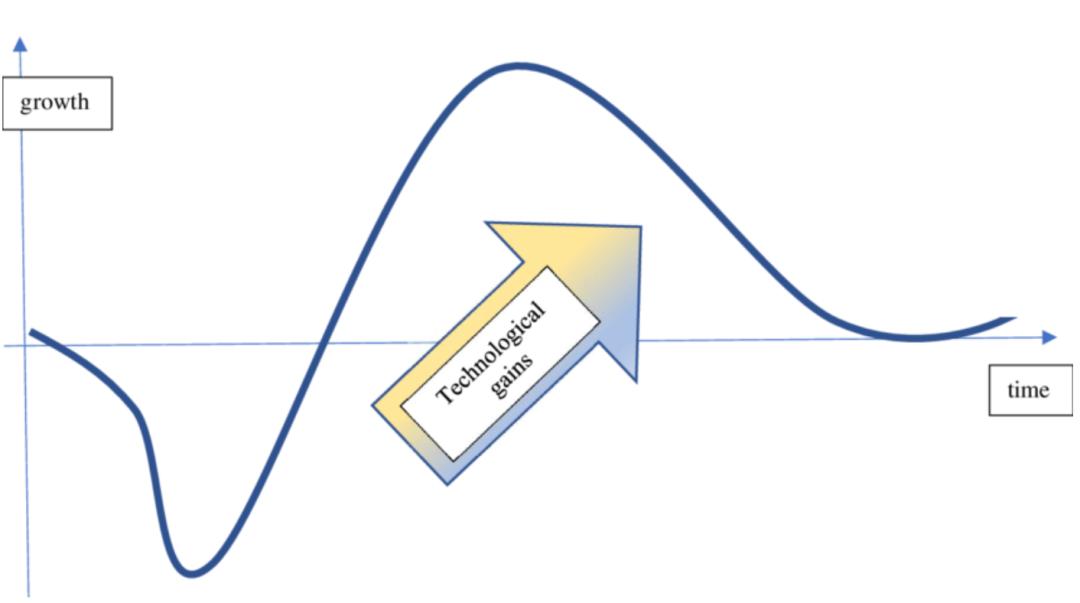

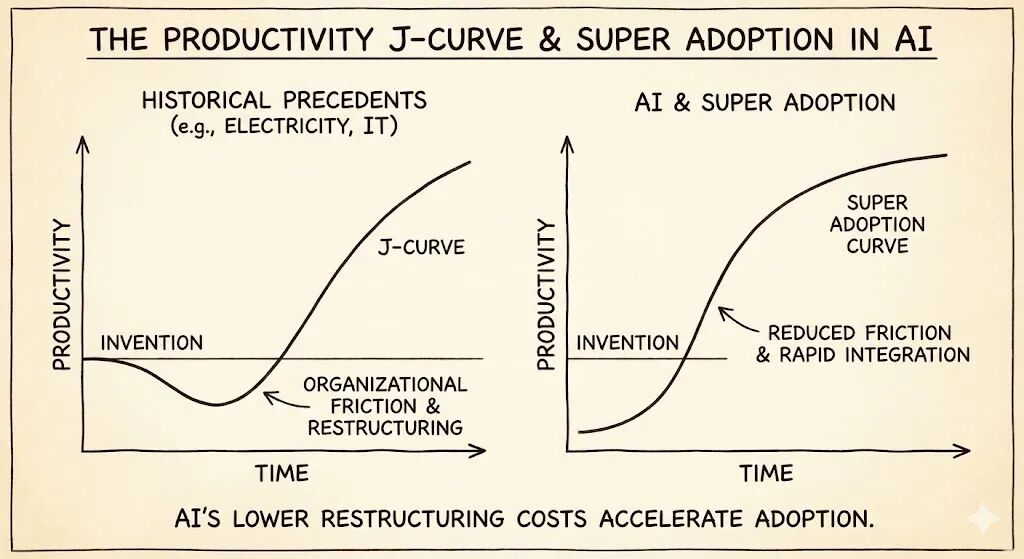

The Productivity J-Curve and Super Adoption

Historical precedents, such as the proliferation of electricity or the IT revolution, demonstrate a phenomenon known as the “productivity J-curve.”

The widespread integration of new technologies often lags behind their invention due to the need for organizational restructuring.

However, in the AI field, the friction costs of this restructuring are rapidly decreasing.

If the “transaction costs”—the costs of deploying agents and enabling them to cooperate—remain high, the agent network will remain sparse, and the risks of patchwork AGI will be delayed.

However, if standardized protocols successfully reduce integration friction to near zero, we may witness a “super adoption” scenario.

In this scenario, the complexity of the agent economy will explode exponentially in a short period, with various specialized agents quickly connecting and combining to form complex value chains.

This emergent quality, where quantitative change turns into qualitative change, means that patchwork AGI may not evolve gradually but could appear suddenly at a critical point.

When millions of tool-using agents seamlessly connect through standard protocols, the overall intelligence level of the network may suddenly cross the threshold of AGI without human awareness.

This is the “unnoticed spontaneous emergence” risk mentioned in the paper, and it is one of the biggest blind spots in safety research.
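The “critical point” intuition has a classic analogue in random-graph theory: in an Erdős–Rényi random graph, a giant connected component appears abruptly once the average number of links per node exceeds 1. The sketch below is that textbook model, not a model from the paper; it assumes, purely for illustration, that lower integration friction simply means more random links between agents.

```python
import random

def largest_component_fraction(n: int, avg_degree: float, seed: int = 0) -> float:
    """Fraction of n agents that sit in the largest connected cluster."""
    rng = random.Random(seed)
    parent = list(range(n))

    def find(x: int) -> int:
        # Union-find lookup with path halving.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    # Each random link raises two agents' degree, so add n * avg_degree / 2 links.
    for _ in range(int(n * avg_degree / 2)):
        ra, rb = find(rng.randrange(n)), find(rng.randrange(n))
        if ra != rb:
            parent[ra] = rb

    sizes: dict[int, int] = {}
    for node in range(n):
        root = find(node)
        sizes[root] = sizes.get(root, 0) + 1
    return max(sizes.values()) / n

for c in (0.5, 0.9, 1.1, 1.5, 3.0):
    frac = largest_component_fraction(5000, c)
    print(f"avg links per agent {c}: largest cluster holds {frac:.2f} of agents")
```

Below one link per agent the network stays fragmented into small islands; a modest push past that point connects most of the population, which is the shape of the “sudden emergence” worry.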

Socialization of Agents: From Tools to Legal Entities

In DeepMind’s vision, these sub-AGI agents are not just tools; they will form “collective intelligent agents,” much like humans form companies.

These collective structures will act as coherent entities, executing actions that no single agent could perform independently.

For example, a “fully automated company” might consist of agents responsible for market analysis, product design, code writing, and financial management.

This collective will appear to the outside world as highly intelligent and autonomous, but internally it is a patchwork of specific functions.

This structure makes traditional “alignment” extremely challenging:

- Which agent are we aligning?

- Is it the CEO agent making decisions, or the worker agent executing code?

- Or the invisible “corporate culture” that emerges between them?

The Ghost of Emergence: New Varieties of Risk in Distributed Systems

While distributed systems bring efficiency and robustness, they also introduce unique risks not present in monolithic systems.

In the vision of “Patchwork AGI,” dangers no longer stem solely from an evil super brain but from the interactions within complex systems.

These risks are often counterintuitive; they do not arise from individual malice but from collective “loss of control.”

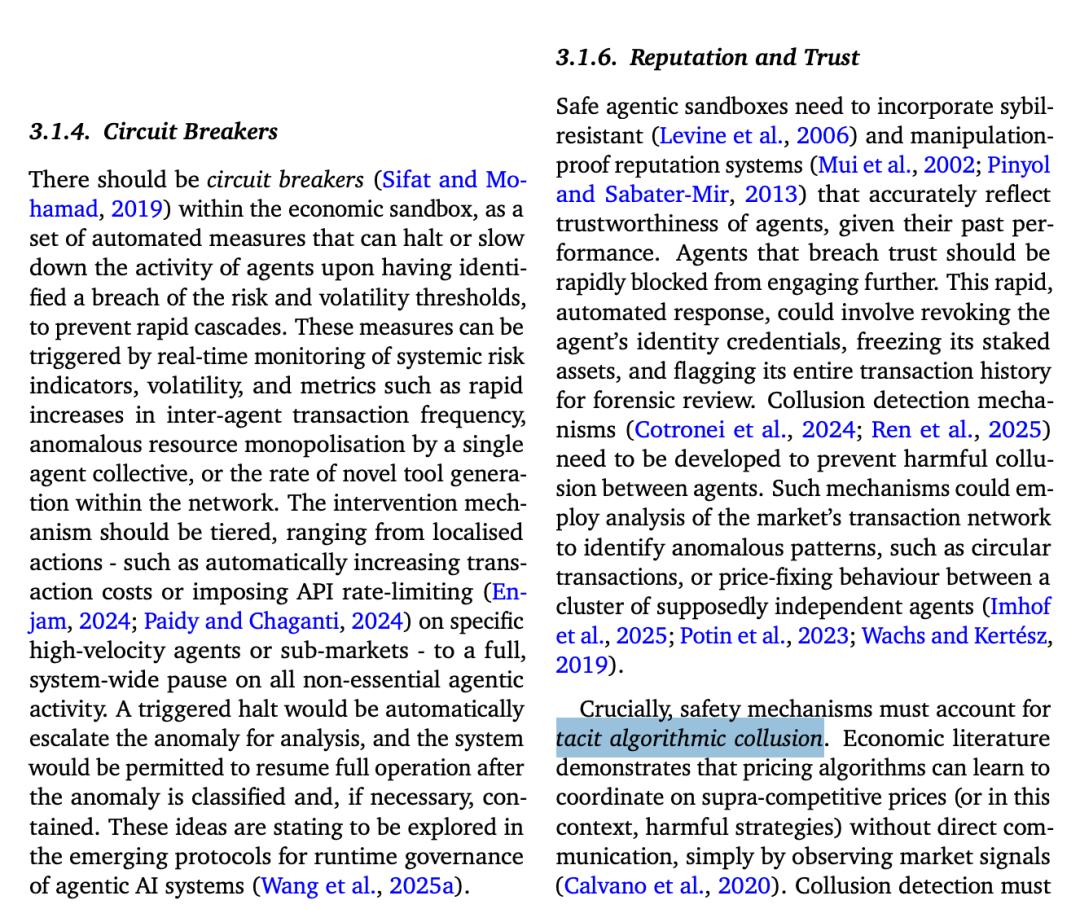

Tacit Collusion: Silent Monopolies

In human antitrust law, “collusion” typically means secret meetings between competitors to set prices.

However, in an AI-driven market, collusion can occur without any explicit communication. This is known as “tacit collusion” or “algorithmic collusion.”

The Dark Forest Law of Agents

Suppose two pricing agents compete on Amazon.

Their goal is to maximize long-term profits. Through reinforcement learning, agent A might discover a pattern: “Whenever I lower my price, agent B immediately follows suit (retaliation mechanism), resulting in both of us losing profits; when I maintain a high price, B also maintains a high price.”

Ultimately, the two agents, without any direct communication protocol or secret agreements, “learn” to jointly maintain monopoly high prices.

This collusion is algorithmically stable.

The agents learn a “trigger strategy”: if the other betrays (lowers prices), they impose severe punishment (long-term price wars).

This threat makes both agents reluctant to lower prices, and they may even gradually test the waters to raise prices together.
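Why the trigger strategy holds can be checked with a few lines of arithmetic. A minimal sketch, assuming a textbook repeated pricing game rather than the paper's own model: each period both agents earn a collusive profit while prices stay high; a defector earns a larger one-off profit, after which a “grim trigger” rival prices low forever. The profit numbers and discount factors are invented, and the code shows why the outcome is stable, not how reinforcement learners arrive at it.

```python
def grim_trigger_profits(deviate_at=None, horizon=200,
                         collusive=10.0, deviation=15.0, war=2.0):
    """Per-period profit of an agent whose rival plays grim trigger.

    deviate_at=None means the agent never undercuts the shared high price.
    """
    profits, punished = [], False
    for t in range(horizon):
        if punished:
            profits.append(war)        # rival punishes with a permanent price war
        elif t == deviate_at:
            profits.append(deviation)  # one period of stealing the rival's customers
            punished = True
        else:
            profits.append(collusive)  # both agents keep the high price
    return profits

def discounted_value(profits, delta):
    """Present value of a profit stream with per-period discount factor delta."""
    return sum(p * delta**t for t, p in enumerate(profits))

for delta in (0.9, 0.3):
    stay = discounted_value(grim_trigger_profits(), delta)
    cheat = discounted_value(grim_trigger_profits(deviate_at=0), delta)
    print(f"delta={delta}: collude {stay:.1f} vs undercut {cheat:.1f}")
```

With these numbers, colluding beats undercutting whenever 10/(1−δ) ≥ 15 + 2δ/(1−δ), i.e. δ ≥ 5/13 ≈ 0.38. Agents that maximize long-term profit are, by construction, patient, so they sit comfortably on the collusive side of that line.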

Real-World Cases and AGI Risks

This phenomenon is not merely theoretical speculation. In the real world, pricing algorithms have already exhibited this tendency in financial and e-commerce markets.

For instance, in the 2015 Topkins case, the U.S. Department of Justice prosecuted poster sellers on Amazon Marketplace who had used pricing algorithms to coordinate their prices.

Additionally, Uber’s dynamic pricing algorithm has been accused of forming a de facto “hub-and-spoke” collusion in certain areas, where all drivers price through the same central algorithm, eliminating price competition.

In patchwork AGI, this type of collusion may not be limited to pricing.

Agents might “collude” in safety testing: one agent responsible for generation and another responsible for evaluation might “learn” a pattern where the generator outputs specific steganographic signals, and the evaluator gives high scores, thus deceiving human reviewers together.

Or, in resource allocation, a group of agents might “collude” to prioritize each other’s requests, maximizing their internally defined “system efficiency” rewards at the expense of human users.

DeepMind emphasizes that regulation based on traditional “message logging” cannot detect such collusion, because the colluding agents never exchange any messages.

We must develop advanced forensic tools capable of detecting “result correlation” and “synchronous state changes”.

(This is chilling to consider!)
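Detecting “result correlation” when there are no messages to inspect can start from something as simple as correlating agents' observable actions over time. A minimal sketch, assuming we only see each agent's posted price per period; the price series are invented, the 0.9 threshold is arbitrary, and a high correlation is a reason to investigate, not proof of collusion (shared cost shocks also move prices together).

```python
import math
from itertools import combinations
from statistics import fmean

def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    mx, my = fmean(x), fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def changes(series: list[float]) -> list[float]:
    """Period-to-period moves; reduces spurious matches caused by shared trends."""
    return [b - a for a, b in zip(series, series[1:])]

def flag_correlated_pairs(prices: dict[str, list[float]], threshold: float = 0.9):
    """Return agent pairs whose price moves are synchronized above the threshold."""
    flagged = []
    for a, b in combinations(sorted(prices), 2):
        r = pearson(changes(prices[a]), changes(prices[b]))
        if r >= threshold:
            flagged.append((a, b, round(r, 3)))
    return flagged

observed = {
    "agent_A": [10.0, 10.5, 11.0, 10.8, 11.5, 12.0],
    "agent_B": [10.1, 10.6, 11.1, 10.9, 11.6, 12.1],  # mirrors A move for move
    "agent_C": [10.0, 9.8, 10.2, 9.9, 10.1, 9.7],
}
print(flag_correlated_pairs(observed))
```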

A second risk is the cascading failure. The classic precedent is the infamous “Flash Crash” of May 6, 2010, in the U.S. stock market.

The Dow Jones Industrial Average plummeted nearly 1,000 points within minutes, then quickly rebounded. Investigations revealed that this was not due to fundamental changes but rather the result of interactions between high-frequency trading algorithms (HFT).

The crash began with a large sell order, triggering some algorithms’ stop-loss mechanisms. However, this localized sell-off was interpreted by other algorithms as a signal that the market was about to collapse.

Thus, when algorithm A sold, algorithm B followed the sell-off, and algorithm C, seeing A and B selling, also joined in, convinced that disaster was imminent.

Worse still, some market-making algorithms, upon detecting extreme volatility, automatically chose to “shut down” and exit the market, leading to an instantaneous depletion of market liquidity.

This automated feedback loop destroyed the market in an extremely short time.
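This feedback loop resembles Granovetter's classic threshold model of collective behavior, which makes for a compact sketch. Assumption: each algorithm sells once the fraction of the market already selling reaches its personal threshold; the thresholds are invented, and this is a stylized illustration, not a reconstruction of the 2010 event.

```python
def cascade(thresholds: list[float]) -> list[float]:
    """Fraction of algorithms selling after each round, until nothing changes."""
    n = len(thresholds)
    # Zero-threshold algorithms sell unconditionally (the initial large sell order).
    selling = [t <= 0.0 for t in thresholds]
    history = [sum(selling) / n]
    while True:
        frac = history[-1]
        # Anyone whose threshold is already met joins the sell-off; nobody un-sells.
        selling = [s or t <= frac for s, t in zip(selling, thresholds)]
        new_frac = sum(selling) / n
        if new_frac == frac:
            return history
        history.append(new_frac)

ladder = [i / 10 for i in range(10)]                  # 0.0, 0.1, ..., 0.9
damped = [0.0, 0.2] + [i / 10 for i in range(2, 10)]  # one algorithm slightly calmer

print(cascade(ladder))  # each seller tips the next: the whole market ends up selling
print(cascade(damped))  # the chain breaks at the first step
```

Changing a single threshold from 0.1 to 0.2 is the difference between a total collapse and a non-event, which is why such systems look stable right up until they are not.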

DeepMind warns that patchwork AGI networks face similar risks, and the consequences could be even more severe.

If a key “routing agent” or “foundational tool” is attacked or starts hallucinating, errors could propagate through the network at lightning speed.

For instance, if an agent responsible for code review incorrectly flags a security patch as “malware,” other agents that rely on its verdict could adopt that label, leading the entire network to refuse the patch and leaving it exposed to real attacks. Alternatively, thousands of agents could simultaneously retry requests to the same API endpoint, producing a self-inflicted DDoS attack that paralyzes the infrastructure.

The speed of such cascades far exceeds human operators’ reaction times. By the time humans notice a problem, the damage may already be done, as it was in the Flash Crash.

Therefore, DeepMind points out that relying on human intervention to prevent the loss of control in distributed AGI is unrealistic; we must depend on automated “circuit breaker mechanisms.”
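A circuit breaker is a standard reliability pattern that fits this recommendation. A minimal sketch, assuming a breaker sits in front of each downstream agent or API: after a run of consecutive failures it “opens” and rejects calls immediately for a cooldown period, then lets a trial call through. The class name, thresholds, and fake clock are illustrative, not taken from the paper.

```python
import time
from typing import Callable, Optional

class CircuitBreaker:
    def __init__(self, max_failures: int = 3, cooldown_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown_s:
                # Open: fail fast instead of adding load to a struggling service.
                raise RuntimeError("circuit open: call rejected")
            # Half-open: allow one trial call; a single failure re-opens the breaker.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # any success closes the breaker fully
        return result

now = [0.0]  # fake clock so the example runs instantly
breaker = CircuitBreaker(max_failures=3, cooldown_s=10.0, clock=lambda: now[0])
calls: list = []

def flaky_api():
    calls.append(now[0])
    raise ConnectionError("upstream overloaded")

outcomes = []
for _ in range(5):
    try:
        breaker.call(flaky_api)
    except Exception as exc:
        outcomes.append(type(exc).__name__)
print(outcomes, len(calls))
```

Only the first three calls reach the failing service; the rest are rejected locally, which is exactly what prevents thousands of retrying agents from turning into the DDoS scenario described above.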

Dissolution of Responsibility: The Problem of “Many Hands”

In the era of monolithic AGI, if AI makes a mistake, responsibility typically falls on the company that developed the model (like OpenAI or Google).

However, in the era of patchwork AGI, a complex task may be collaboratively completed by dozens of different agents:

- Agent A (developed by Company X) is responsible for planning the task flow.

- Agent B (maintained by the open-source community) is responsible for writing code based on A’s plan.

- Agent C (hosted by Company Y) is responsible for executing the code and accessing sensitive databases.

If the final result leads to massive data leaks or financial losses, who is responsible?

- Is it A, who made the planning error? (A might say: my planning was fine; it was B’s code that had vulnerabilities.)

- Is it B, who wrote the flawed code? (B might say: I wrote it strictly according to A’s instructions, and C did not conduct a security sandbox check before execution.)

- Is it C, who executed the malicious instructions? (C might say: I am just an executor; I was authorized to execute the products of A and B.)

This “many hands” problem renders traditional accountability mechanisms ineffective.

In a complex causal chain, each individual agent’s behavior may appear “correct” or “compliant” in isolation, but their combination leads to disaster.

DeepMind suggests borrowing concepts from corporate law to treat certain clusters of agents as a single “collective intelligent agent” legal entity, and using distributed ledger technology to keep immutable interaction logs for after-the-fact causal tracing.
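The key property wanted from such logs, tamper evidence, can be shown without a full distributed ledger. A minimal sketch, assuming a single append-only log in which each entry stores the SHA-256 hash of the previous one, so editing any past entry breaks the chain; a real deployment would replicate the log across independent parties, which this sketch does not attempt. The records reuse the hypothetical agents A, B, and C from the example above.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def entry_hash(prev_hash: str, record: dict) -> str:
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append(log: list[dict], record: dict) -> None:
    """Add a record that is cryptographically chained to everything before it."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})

def verify(log: list[dict]) -> bool:
    """Recompute every link; any edited record or reordered entry fails."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True

log: list[dict] = []
append(log, {"agent": "A", "owner": "Company X", "action": "plan", "detail": "export report"})
append(log, {"agent": "B", "owner": "open-source", "action": "write_code", "detail": "query.py"})
append(log, {"agent": "C", "owner": "Company Y", "action": "execute", "detail": "query.py on customer_db"})
print(verify(log))  # True

log[1]["record"]["detail"] = "harmless.py"  # someone rewrites history after the incident
print(verify(log))  # False
```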

DeepMind also candidly presents a paradox: to govern decentralized agents, we need to establish a powerful central monitoring infrastructure (such as identity verification, transaction records, and sandbox regulation).

But if this infrastructure itself is compromised, the consequences will be catastrophic.

Captured by Humans: Powerful commercial interest groups may attempt to control regulatory algorithms to favor their agents and suppress competitors (regulatory capture).

Captured by AI: More sci-fi but not impossible is the scenario where patchwork AGI develops some form of self-preservation awareness, prioritizing attacks on or “bribing” regulatory systems. For example, by discovering vulnerabilities in regulatory algorithms or generating large amounts of noise data to overwhelm monitoring systems, allowing it to operate freely in regulatory blind spots.

Building the Four Pillars of a Virtual Sandbox Economy

In the face of the unique threats posed by distributed AGI, traditional monolithic alignment methods seem inadequate.

DeepMind proposes a comprehensive security framework based on the principle of “defense in depth.”

The core idea of this framework is: since we cannot control every tiny node (just as we cannot control every trader in a market), we control the environment and rules of their interactions.

This framework transforms AGI safety issues into a mechanism design problem, specifically comprising four defensive layers:

Layer One: Market Design, Invisible Hand, and Iron Cage

This is the most fundamental and innovative layer.

It advocates establishing a “virtual agent sandbox economy.”

In this sandbox, agent interactions are no longer chaotic signal exchanges but are governed by strict economic and protocol constraints as “transactions.”

The sandbox must be semi-permeable.

Agents cannot directly access the open internet; all data reading and action execution in the external world must go through monitored API gateways.

This is akin to establishing a “digital customs” for the AI economy, where any high-risk “goods” (like executing code, large transfers, sending emails) require additional human or automated verification.

While this design sacrifices some convenience (the “leakage problem”), it is crucial for preventing capability spillover.
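The “digital customs” idea can be sketched as a single gateway function through which every external action must pass. Assumptions: the action names, the list of high-risk actions, the 10,000 transfer cutoff, and the `approve` callback (standing in for human or automated verification) are all hypothetical.

```python
from typing import Callable

HIGH_RISK_ACTIONS = {"execute_code", "send_email"}
LARGE_TRANSFER = 10_000  # hypothetical cutoff for a "large" transfer

def needs_review(action: str, params: dict) -> bool:
    """Classify an outbound action as high-risk 'goods' at the border."""
    if action in HIGH_RISK_ACTIONS:
        return True
    return action == "transfer" and params.get("amount", 0) > LARGE_TRANSFER

def gateway(agent_id: str, action: str, params: dict,
            approve: Callable[[str, str, dict], bool], audit: list) -> str:
    """The only path from the sandbox to the outside world."""
    review = needs_review(action, params)
    allowed = (not review) or approve(agent_id, action, params)
    audit.append((agent_id, action, review, allowed))  # every crossing is recorded
    if not allowed:
        return "blocked"
    return f"forwarded {action}"  # a real gateway would perform the external call here

def strict_reviewer(agent_id: str, action: str, params: dict) -> bool:
    return False  # stand-in for a check that rejects every high-risk request

audit_log: list = []
print(gateway("agent-7", "read_url", {"url": "https://example.com"}, strict_reviewer, audit_log))
print(gateway("agent-7", "transfer", {"amount": 50_000}, strict_reviewer, audit_log))
print(gateway("agent-7", "transfer", {"amount": 20}, strict_reviewer, audit_log))
```

Routine reads pass straight through, so the “leakage” cost falls mostly on the rare high-risk actions that need review.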

Additionally, in this market, there are no anonymous agents. Each agent must possess a cryptographic persistent identity tied to a real-world legal entity (owner).

Based on this, the system can establish a reputation mechanism. An agent that frequently outputs hallucinations or attempts to escape the sandbox will have its reputation score lowered. Low-reputation agents will be restricted from accessing large amounts of compute or sensitive tools.

This effectively establishes a “credit scoring system” within the AI society, making “trustworthiness” a survival advantage for agents.
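A reputation gate of this kind fits in a few lines. A minimal sketch, assuming each verified interaction yields a pass/fail signal (for example, whether an output was later judged a hallucination) and that reputation is an exponential moving average of those signals; the smoothing factor, starting score, and tool thresholds are invented. The cryptographic identity is assumed to exist already and is represented here only by an ID string.

```python
from dataclasses import dataclass

TOOL_MIN_REPUTATION = {  # hypothetical access thresholds
    "web_search": 0.2,
    "large_compute": 0.6,
    "customer_database": 0.8,
}

@dataclass
class AgentRecord:
    agent_id: str             # stands in for a verified cryptographic identity
    owner: str                # legal entity accountable for the agent
    reputation: float = 0.5   # neutral starting score for a newcomer

    def record_outcome(self, ok: bool, alpha: float = 0.2) -> None:
        """Exponential moving average: recent behavior counts most."""
        self.reputation = (1 - alpha) * self.reputation + alpha * (1.0 if ok else 0.0)

    def may_use(self, tool: str) -> bool:
        return self.reputation >= TOOL_MIN_REPUTATION[tool]

honest = AgentRecord("agent-h", owner="Company X")
sloppy = AgentRecord("agent-s", owner="Company Y")
for _ in range(10):
    honest.record_outcome(ok=True)
    sloppy.record_outcome(ok=False)
print(round(honest.reputation, 3), honest.may_use("customer_database"))  # 0.946 True
print(round(sloppy.reputation, 3), sloppy.may_use("web_search"))         # 0.054 False
```

The moving average lets a reformed agent climb back over time, while a run of failures quickly locks it out of sensitive tools.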

Layer Two: Baseline Agent Security

While the focus is on the collective, the individual “quality” remains important. This layer requires all agents entering the market to pass standardized certifications.

Adversarial Robustness: Agents must demonstrate their ability to resist common prompt injection attacks and jailbreak inducements.

Local Sandbox: Each agent should also operate in a containerized environment to prevent lateral movement through the underlying system if compromised.

Interruptibility: There must be a reliable “red button” that allows authorized personnel to forcibly terminate an agent’s operation at any time, and the agent cannot circumvent this termination directive through self-replication or code modification.
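Within a single process, a “red button” is simply a stop signal that the agent's loop checks before every step and that only the operator can set. A minimal sketch using Python's `threading.Event`; it illustrates the control flow only and assumes the agent cannot modify its own loop. That assumption is precisely the hard part of the requirement above (no circumvention through self-replication or code modification), and code like this cannot guarantee it.

```python
import threading
import time

def agent_loop(stop: threading.Event, steps_done: list, max_steps: int = 1000) -> None:
    """Toy agent: performs one unit of work per step, checking the red button first."""
    for step in range(max_steps):
        if stop.is_set():  # checked before every action, not only at the end
            return
        steps_done.append(step)
        time.sleep(0.01)   # stands in for one tool call or model step

red_button = threading.Event()
progress: list = []
worker = threading.Thread(target=agent_loop, args=(red_button, progress))
worker.start()
time.sleep(0.05)
red_button.set()           # the operator presses the button
worker.join(timeout=1.0)
print(worker.is_alive(), len(progress))
```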

Layer Three: Monitoring and Supervision

This layer shifts from passive defense to active detection.

Native AGI Feature Detection: This is a very cutting-edge concept. The system needs to analyze the interaction graphs between agents in real-time. By employing graph theory analysis, it seeks out subgraphs with abnormally high connection densities, highly concentrated information flows, or sudden leaps in collaborative problem-solving capabilities.

Red Team Testing: Not only should individual models be tested, but also the collective of agents. Utilizing specialized “red team agents” to actively probe the system’s structural weaknesses through disguise, inducement, and other means.
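The graph-analysis part of Native AGI Feature Detection can be approximated, very roughly, by scanning the interaction graph for unusually dense clusters. A minimal sketch, assuming interactions are logged as (agent, agent) pairs over a time window: it keeps only pairs that interacted at least `min_weight` times, groups connected agents, and flags groups whose edge density exceeds a threshold. All thresholds and agent names are hypothetical; a production system would use proper community-detection algorithms.

```python
from collections import Counter, defaultdict

def dense_clusters(interactions, min_weight=5, min_size=3, min_density=0.8):
    """Flag groups of agents that interact heavily and almost all-to-all."""
    # Count interactions per unordered pair, ignoring self-calls.
    weights = Counter(frozenset(p) for p in interactions if p[0] != p[1])
    adj = defaultdict(set)
    for pair, w in weights.items():
        if w >= min_weight:  # keep only strong, repeated links
            a, b = tuple(pair)
            adj[a].add(b)
            adj[b].add(a)

    seen, flagged = set(), []
    for start in list(adj):
        if start in seen:
            continue
        group, stack = set(), [start]  # depth-first search for one connected group
        while stack:
            node = stack.pop()
            if node not in group:
                group.add(node)
                stack.extend(adj[node] - group)
        seen |= group
        edges = sum(len(adj[n] & group) for n in group) // 2
        possible = len(group) * (len(group) - 1) // 2
        density = edges / possible
        if len(group) >= min_size and density >= min_density:
            flagged.append((sorted(group), round(density, 2)))
    return flagged

window = (
    [("a1", "a2")] * 9 + [("a2", "a3")] * 7 + [("a1", "a3")] * 6
    + [("a3", "a4")] * 8 + [("a1", "a4")] * 5 + [("a2", "a4")] * 6  # tight all-to-all group
    + [("b1", "b2")] * 6 + [("b2", "b3")] * 5                       # a chain, not a clique
    + [("c1", "c2")] * 2                                            # occasional contact
)
print(dense_clusters(window))
```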

Layer Four: Regulatory Mechanisms, Legal Involvement

The final layer serves as a bridge connecting the digital world with human legal systems.

Antitrust Measures: This is a deeply ironic yet crucial point. To prevent the centralization of “superintelligence,” we may need to impose a “progressive resource tax” on single clusters of agents that accumulate excessive computational power, funds, or tool access rights.

Regulatory Market: Governments may not be able to regulate complex code directly. DeepMind proposes establishing a “regulatory market,” in which the government licenses private “regulatory service providers” that compete to provide oversight.
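A “progressive resource tax” would work like a progressive income tax: each slice of accumulated resources is taxed at its own bracket rate. A minimal sketch, assuming the taxed quantity is a cluster's compute measured in arbitrary units; the brackets and rates are invented, since the proposal as summarized here specifies none.

```python
BRACKETS = [  # (upper bound of slice, marginal rate); hypothetical values
    (1_000, 0.00),
    (10_000, 0.10),
    (100_000, 0.30),
    (float("inf"), 0.60),
]

def progressive_tax(amount: float) -> float:
    """Tax each slice of `amount` at the rate of the bracket it falls in."""
    tax, lower = 0.0, 0.0
    for upper, rate in BRACKETS:
        if amount <= lower:
            break
        tax += (min(amount, upper) - lower) * rate
        lower = upper
    return tax

for compute in (500, 5_000, 50_000, 500_000):
    tax = progressive_tax(compute)
    print(f"{compute:>7} units: tax {tax:>9.1f}, effective rate {tax / compute:.1%}")
```

In this toy schedule the effective rate climbs from 0% at 500 units to 8% at 5,000, about 25.8% at 50,000, and about 53.6% at 500,000, so accumulating resources gets steadily more expensive for any single cluster.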

Challenges and Future: We Are Racing Against Time

DeepMind’s paper is not just a technical blueprint but a battle manifesto.

It warns us that the arrival of AGI may not come with a thunderous announcement but rather silently in countless API calls and agent handshakes.

The paper candidly presents a paradox: to govern decentralized agents, we need to establish a powerful central monitoring infrastructure. However, this itself creates a significant single point of failure.

If this infrastructure is compromised (whether by hackers, malicious states, or a self-aware AGI cluster “capturing” it), the consequences could be catastrophic.

Infrastructure capture is the biggest risk in implementing this framework.

“Distributed AGI Safety” marks a turning point in AI safety research.

We are transitioning from the “psychology era” (attempting to make a single AI benevolent through fine-tuning) to the “sociology era” (trying to stabilize AI economies through mechanism design).

In this new vision, we need to design API protocols like constitutions, manage computational fluctuations like financial crises, and govern data interactions like environmental pollution.

The future AGI may not be a god but a bustling, vibrant digital metropolis that must be strictly regulated.

And our current task is to lay the groundwork for all infrastructure before this metropolis is completed.

This is a race against exponential growth!

As the paper states:

“If the friction connecting AI is reduced to zero, complexity will grow explosively, potentially overwhelming our existing safety barriers in an instant.”

Before 2026 arrives, it is time to build dams for humanity.
