Introduction

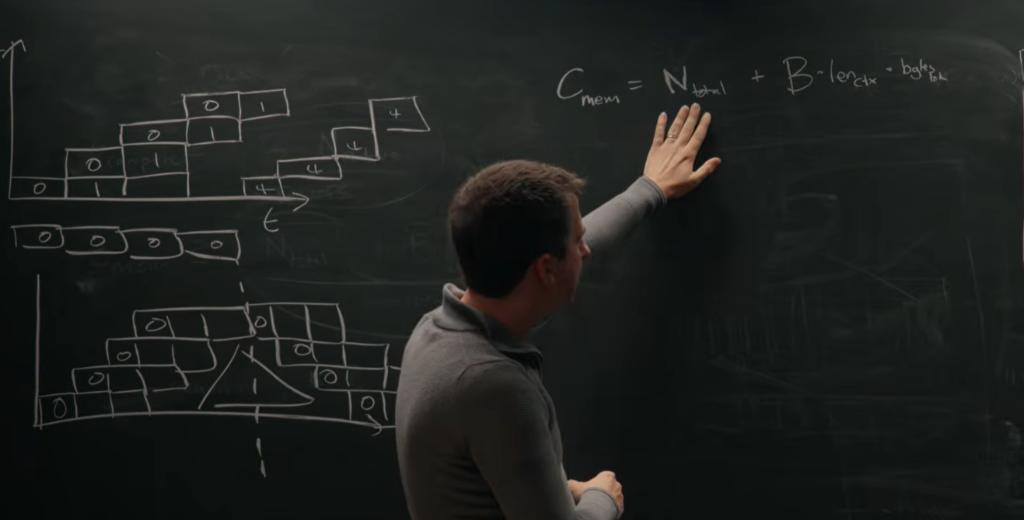

Chip engineer Reiner Pope breaks down the training and reasoning logic behind GPT-5, Claude, and Gemini using a blackboard and equations. In a recent podcast, he shared insights with Dwarkesh Patel, highlighting the architecture details inferred from public API pricing.

Key Insights

Pope’s core conclusions include:

- Without batch processing user requests, the cost of single inference can be 1000 times higher.

- The pre-training data volume for GPT-5 is 100 times the theoretical optimal solution.

- DeepSeek V3 has 256 experts, activating only a small portion (32) during each inference.

- The MoE (Mixture of Experts) architecture is limited to 72 GPUs per rack, which is a significant physical bottleneck for model scaling.

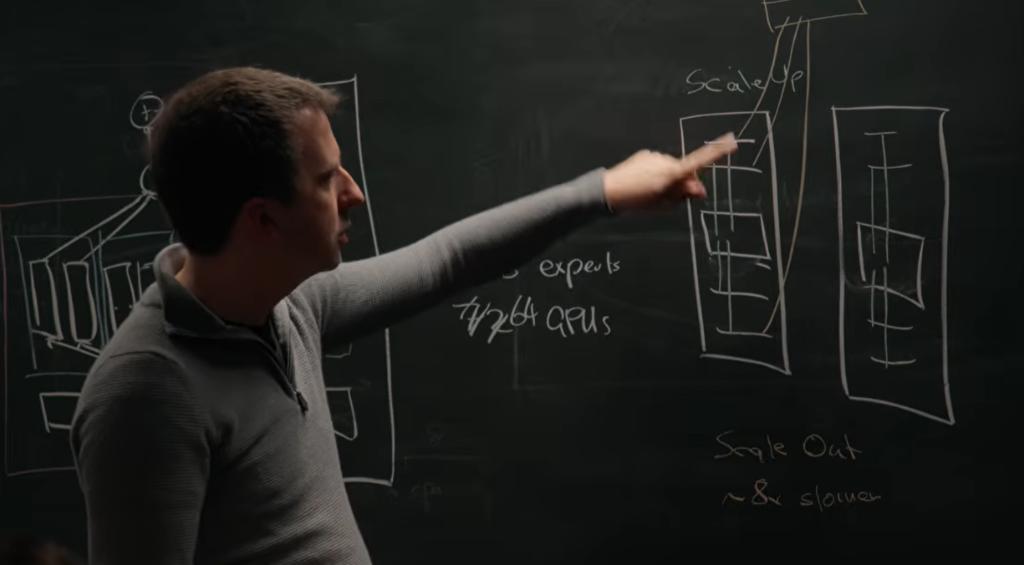

The Impact of GPU Racks on Model Size

To understand why top models are structured the way they are, we must start with hardware. Modern large models run inference on GPU clusters. The NVIDIA Blackwell NVL72 is the current mainstream deployment form, with 72 GPUs connected via NVLink for high-speed communication.

However, communication speed drops by 8 times when crossing racks, which directly limits the deployment of MoE models.

Pope explains that DeepSeek V3 operates with 256 experts, activating only a fraction (32) during inference. The most efficient deployment is to use “expert parallelism,” where different experts are placed on different GPUs. This configuration matches the NVLink topology perfectly.

Yet, when experts are distributed across two racks, half of the tokens must traverse the slower network, creating a bottleneck. This explains why Gemini appears to have achieved pre-training success earlier than others; Google’s TPU system has a larger scale-up domain, allowing for more efficient all-to-all communication.

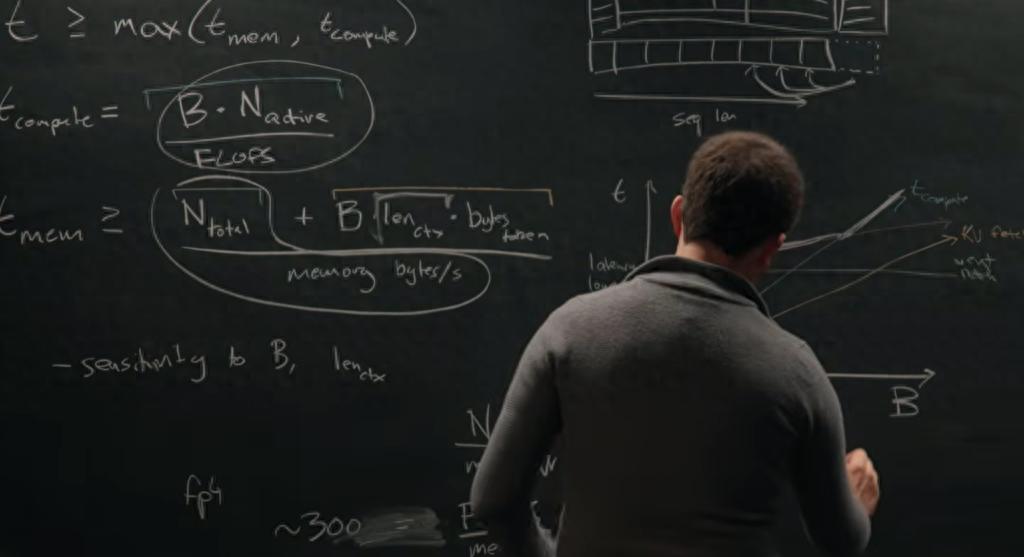

The Secret of Batch Processing

The interview also discussed a common market phenomenon: products like Claude and Codex offer a “fast mode” that costs 6 times more but only speeds up processing by 2.5 times. Pope clarifies that the key variable is batch size.

He likens inference to a train schedule, where each batch can carry a certain number of passengers (users). The unit cost of inference is extremely high with small batch sizes but drops dramatically as batch size increases.

Pope estimates that without batch processing, costs could be 1000 times higher. The optimal batch size is approximately 300 times the model’s sparsity, leading to around 2400 concurrent sequences for models like DeepSeek that activate 1/8 of the experts.

Thus, using a “slow mode” to reduce costs mathematically doesn’t work because KV caches (which store user history) cannot be shared across users, meaning that waiting does not significantly lower costs.

Inferring Model Architecture from API Pricing

Pope demonstrates a fascinating inference process: internal architecture parameters can be deduced from public API pricing.

Clue 1: Price Increase at 200,000 Tokens

Gemini raises its price by 50% after 200,000 tokens. Pope explains that this corresponds to the point where KV cache memory bandwidth costs exceed weight matrix computation costs, marking a shift from a “computation bottleneck” to a “memory bandwidth bottleneck.”

Clue 2: Output Tokens Cost More

Most models charge 3-5 times more for output tokens than for input tokens. This is due to the efficiency of processing large batches during the prefill phase compared to generating one token at a time during decoding, which is limited by memory bandwidth.

Clue 3: Cache Hits are Cheaper

API pricing often discounts cache hits significantly. Pope explains that this reflects the cost differences of storing KV caches across different memory levels, with re-computation being much more expensive than direct reads from memory.

Overtraining in GPT-5

One of the most shocking estimates from the talk is that GPT-5’s pre-training data volume is about 100 times greater than the optimal training amount. Pope notes that when the costs of pre-training, reinforcement learning training, and inference are roughly equal, overall efficiency is maximized.

Assuming a model has an inference flow of about 50 million tokens per second and a lifespan of about 2 months, the total inference token count is approximately 200 trillion. The optimal solution based on around 100 billion active parameters is about 20 trillion tokens, leading to a ratio of 100 times.

Pope emphasizes that the amount spent on serving users should roughly equal the amount spent on training, or else money is wasted.

Pipeline Parallelism: Limited Value

Regarding pipeline parallelism, Pope concludes that while it saves memory capacity, it does not resolve the KV cache issue, making it of limited value in inference scenarios.

Convergent Evolution of Neural Networks and Cryptography

In the final part of the interview, Pope discusses his blog post on the convergent evolution between neural network architectures and cryptographic protocols. Both aim to mix input information throughout the system, but with opposite goals: cryptography seeks to obscure structure, while neural networks aim to discover it.

Pope cites the Feistel network as a specific case of technology transfer, which has been adapted into neural networks to form RevNets, allowing for more efficient memory usage during training.

This contrasts with KV caching, which trades memory for computation, a strategy that is often beneficial under current hardware conditions.

Comments

Discussion is powered by Giscus (GitHub Discussions). Add

repo,repoID,category, andcategoryIDunder[params.comments.giscus]inhugo.tomlusing the values from the Giscus setup tool.